Demand Modeling Explained - Part 1: Why Demand Modeling

The origins of demand modeling can be traced back to the 1970s. At the time, research was being conducted at MIT's Draper Labs in Cambridge, Massachusetts, into new techniques to improve forecast accuracy, especially for slow-moving items. One of the key players in that early research was Mr. Eugenio Cornacchia, who went on to improve on the early techniques and, in 1993, co-founded the company ToolsGroup around the concepts of demand modeling and one of its primary uses, inventory optimization.

The research into demand modeling was certainly not the only research being conducted into improving forecast accuracy around that time. Methods like Croston's and Box-Jenkins are examples of techniques developed in the same era that had similar objectives. The former was even developed to address the very same issue: improving accuracy for slow-moving items. The driver for all this research was the very acute need to improve on the existing forecasting approaches, which were highly unreliable except maybe for the fastest-moving items at aggregate levels. Most of that research (at least most that survived its infancy) was evolutionary in nature: many small - and a few not so small - steps accumulating to significant overall improvement, but never breaking the old mold. And although the amount of research in this area seems to have slowed down, or at least is not producing as many publicized improvements, it continues to this day. The need to improve forecast accuracy has never gone away since the very first forecasts were generated.

The "why" of demand modeling back in the day of its inception can be placed squarely in that corner too. Forecast accuracy was its objective then. It was also one of very few truly revolutionary approaches that seem to have survived. Others may have existed, but if so they did not pass the test of time. Other drastically different approaches that did survive have typically all fallen into the category of black-box systems. In certain environments these would improve on the accuracy of traditional approaches, but at the expense of insight into how and why the results turned out as they did, and very often also at the expense of determinism (if you run the same series 10 times, you get 10 different answers). This caused a lot of distrust of these systems and explains their lack of adoption. Their objective was the same as all the other approaches: forecast accuracy - a real pain that needed real relief.

In the early days the focus of demand modeling was on slow-moving items, since those were suffering from much worse forecast errors than their faster moving siblings. However, the algorithm that was developed turned out to be more accurate for the fastest moving items too, and everything in between. So, rather than becoming yet another option to add to the growing list of algorithms to be weighed against each other, this allowed the demand modeling algorithm to stand alone. Once released from the shackles that bound all those other algorithms it could evolve in a different direction and at a different speed from the others.

So this little background explains in a nutshell the state of demand forecasting back then, and why demand modeling was originally conceived. But what about the "why" we are really interested in?

Why demand modeling today?

Let's start with what the approach aims to achieve, then list some of its characteristics, and finally summarize what this means for a company implementing or considering such an approach.

Today, forecast accuracy, or more broadly forecast reliability, is still a primary objective of demand modeling. However, many other merits have been discovered over time, and have grown into the fabric of demand modeling as it has evolved. Like many great inventions, some of these merits may have been discovered accidentally, or as an unanticipated side-effect.

Two such additional benefits, turned objectives, are more reliable inventory optimization to drive service, and minimization of the bullwhip effect. In my personal opinion these are even more valuable than forecast accuracy since these directly impact the business, its profitability and stability, whereas forecast accuracy only does so indirectly. On the topic of how demand modeling drives reliability in inventory optimization I aim to write one or more detailed articles some time in the near future. On the topic of the bullwhip effect I have already blogged at length. I do not intend to spend more time on these topics in this current series.

As indicated in the introduction, demand modeling nowadays is no longer just for slow-moving items. The first companies to implement demand modeling were typically in the areas of spare parts or maintenance, repair and overhaul (MRO), but some of the big success stories today are among Fortune 500 fast-moving consumer goods (FMCG) companies. If all anybody cared about was forecasts at aggregate levels (say monthly by SKU and country) there would be a small, but nevertheless real, benefit in using demand modeling over alternative approaches even for FMCG companies.

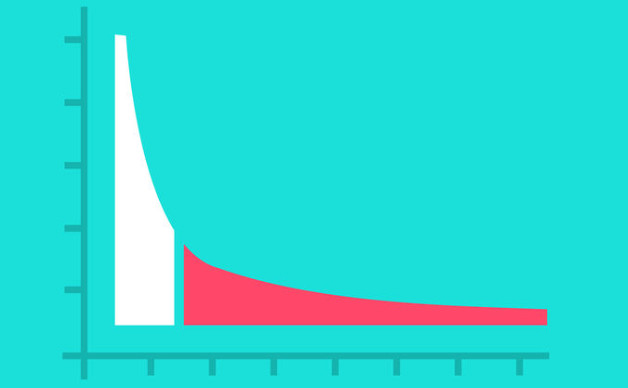

The big realization comes only after you accept that forecasts have very little tactical or operational value at those aggregate levels. When you need to ship product to a customer, it is never sufficient to know which month and which country (nor even which specific account). The shipment needs to go to a specific location by a specific date. The inventory needs to be available from a specific source location by another specific date. The forecast had better be accurate for that specific location and specific date. Having the inventory on the other side of the country or at a different time of the month is no help at all. What we've found in benchmarks is that the biggest errors by far in the whole forecasting process are introduced when aggregate values are split down to the level where replenishment and inventory are planned. Typical deterioration is a 40%-50% increase in MAPE when splitting monthly forecasts into weekly forecasts, and another 40%-50% increase in MAPE when splitting national forecasts down to the ship-from DC. A forecast with 90% accuracy at month/SKU/nation level will rarely achieve more than a meager 25% accuracy after being split down to week/SKU/DC. And this is using the most advanced splitting algorithms boasted by vendors like SAP, Oracle and JDA. Frankly, why even bother putting any effort into forecasting if you cannot put the result to use?
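To make the splitting problem concrete, here is a minimal sketch with invented numbers. It assumes a monthly forecast that is exactly right in total and a naive even split into four weeks; this is a deliberately simple stand-in, not one of the vendor splitting algorithms mentioned above, and the `mape` helper and the weekly figures are purely illustrative.

```python
def mape(actuals, forecasts):
    """Mean absolute percentage error, as a fraction (actuals must be non-zero)."""
    return sum(abs(f - a) / a for a, f in zip(actuals, forecasts)) / len(actuals)


# Hypothetical weekly actuals for one SKU; they add up to 100 for the month.
weekly_actuals = [10, 40, 20, 30]

# Assume the monthly forecast is perfect in total.
monthly_forecast = 100

# Naive split: spread the monthly number evenly over the weeks.
n_weeks = len(weekly_actuals)
weekly_forecast = [monthly_forecast / n_weeks] * n_weeks

monthly_mape = mape([sum(weekly_actuals)], [monthly_forecast])
weekly_mape = mape(weekly_actuals, weekly_forecast)

if __name__ == "__main__":
    print(f"MAPE at month level: {monthly_mape:.1%}")
    print(f"MAPE at week level:  {weekly_mape:.1%}")
```

Even with a flawless monthly number, the weekly error in this toy case is large, because the split knows nothing about how demand actually falls within the month. Smarter splitting rules reduce the effect but cannot remove the underlying assumption.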

To be clear, this is an argument in favor of bottom-up approaches to forecasting in general, not demand modeling specifically. All bottom-up approaches avoid the need for any splitting mechanisms on the statistical forecast, with all their inherent assumptions and approximations. Note that splitting in such cases may still be needed if humans adjust demand quantities at aggregate levels, but in that scenario the ratios used for splitting can be based on the much more accurate understanding of the forecast at the detail level rather than on arbitrary rules. Demand modeling goes one step further still, removing the need for humans to adjust demand quantities at any level, and thus the need to split at all. Such input, although not strictly needed nor typically even beneficial, can still be entered if the implementing company really wants to, and is then split using the same lossless approach that other bottom-up systems use.

Notice the word "after" two paragraphs up. After you accept the reduced value of aggregate forecasts, the real realization is that once you look at time series at more granular levels, even fast-moving items start showing intermittent patterns, which are normally associated with slow movers. So algorithms that are strong for intermittent demand gain a lot of ground on those that are only strong for fast-moving items. Add to this the market trends of globalization and product proliferation, both of which cause fragmentation and even more intermittent patterns. Under these circumstances, even the fastest-moving items in the fastest-moving industries will show intermittency in some of the locations where they are shipped or sold. This is a main reason why a technique that was conceived specifically for slow-moving environments is not only strong in fast-moving environments but keeps getting stronger. This is especially true in comparison to the alternatives, for which the portion of the domain where they perform well is continually shrinking.
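A small simulation can illustrate how intermittency appears as granularity increases. This is only a sketch under invented assumptions: one item selling 300 units in a 30-day month, with each unit landing on a uniformly random day and at one of 50 hypothetical locations. The function names and parameters are illustrative and not part of any demand modeling product.

```python
import random
from collections import Counter


def zero_share(counts, n_cells):
    """Fraction of cells (days, or day/location pairs) with no demand at all."""
    return 1 - len(counts) / n_cells


def simulate(units=300, days=30, locations=50, seed=42):
    """Scatter unit sales over days and locations, then measure zero-demand shares."""
    rng = random.Random(seed)
    day_loc = Counter()  # demand per (day, location); only non-zero cells get a key
    for _ in range(units):
        day_loc[(rng.randrange(days), rng.randrange(locations))] += 1

    by_day = Counter()  # the same demand aggregated to day/nation level
    for (day, _loc), qty in day_loc.items():
        by_day[day] += qty

    return {
        "month/nation": 0.0 if units > 0 else 1.0,  # a single cell holding all units
        "day/nation": zero_share(by_day, days),
        "day/location": zero_share(day_loc, days * locations),
    }


if __name__ == "__main__":
    for level, share in simulate().items():
        print(f"{level:>13}: {share:.0%} of periods have zero demand")
```

With 300 units spread over 1,500 day/location cells, at least 80% of those cells must be empty, whatever the random draw. The same item that looks like a steady fast mover at month/nation level looks like a slow mover at the level where it is actually shipped.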

One of the consequences of the fragmentation that I have seen in the market is that companies using traditional forecasting approaches qualify a portion of their portfolio as simply "unforecastable". These are items or item/locations for which they concede that forecasting is of no use, since it is "impossible" or does not add value. As a result this entire portion of their portfolio is under-performing, frequently losing the company money. And the trend seems to be that the size of this portion is increasing. In reality, there is no such thing as "unforecastable". There are merely forecasting approaches that are not up to the task. It is true that some items or item/locations are less forecastable than others, which means you will need to buffer those with more inventory or capacity relative to the demand quantities. In a majority of cases there is still positive value to be generated from those. You will need a reliable forecast in a very specific output format to not only understand where that value is to be found, but also to obtain it. I will explain why and how demand modeling accomplishes this in detail in future blog posts, including the next two parts of the current series. For those cases in which no value is to be found, the same understanding will help determine which items to drop or to start making to order only. Even in those cases where a product cannot drive direct value but also cannot be dropped due to SLAs or other reasons, the same understanding will help minimize the impact. In all of these cases rough rules of thumb will cause significant loss of value and erosion of overall margins.

You would not be far from the truth if you concluded from the above that demand modeling is based on a single algorithm. More accurately, though, modern-day demand modeling is based on a single model, which generates a single algorithm that is custom fit to each implementation and dynamically adjusts as the environment requires. But more on that later. For now, let's assume there is effectively a single algorithm.

The last three major benefits of demand modeling that I wish to highlight at this point are in large part due to this effectively single algorithm. The first of these is a greatly reduced burden on the planners, allowing a much larger portfolio to be effectively and efficiently managed by a team of planners that would otherwise only be able to focus on a few key customers and a few key products to the exclusion of everything else. This is due to three reasons: a more accurate forecast requiring less intervention; highly reliable exception management allowing planners to laser focus on real exceptions and only real exceptions; and last but not least no need to ever second-guess the selection of the algorithm, since there is only one (and good luck to you if you want to try and outperform that one).

The second major benefit of a single algorithm is plain performance. By not having to fit 20 to 30 different algorithms and then determine which one of those yields the best result, quite a bit of waste can be eliminated from the forecasting process. Try forecasting 50 million active SKU/locations in daily time granularity every night in under an hour with any traditional forecasting approach. Try supporting that many items with just 4 planners and 1 backup each. That kind of scalability can only be achieved with a single algorithm.

The third benefit of a single algorithm is a very smooth transition across all phases of a product's life cycle. There is no need to have one algorithm for introduction, another for main life phase, and yet another for transition to retirement, with all the jerky switch-overs that go along with that. The demand patterns smoothly progress along the life cycle's continuum, all on auto-pilot.

As a supply chain company, what does all of this mean for me?

Let me sum it up: completeness, accuracy, scalability, and human capital.

Demand modeling provides a means to generate a forecast for every single one of your SKU/locations. No exclusions. No items that are "unforecastable". Every item generates the value it is capable of.

The accuracy of the forecasts it generates is unparalleled across the entire spectrum of demand patterns and across every product's entire life cycle. Compared to best-in-class traditional forecasting the relative improvement may range from a modest few percent for the fastest moving items at high levels of aggregation up to 80% for the slowest moving items at operational level of detail.

The efficiency of the approach allows much larger numbers of items to be forecasted with the same hardware and with the same number of planners. And these planners do not need to be super-humans, as they need to be with traditional forecasting. There is no need for large numbers of people who have PhDs in statistics as well as people skills and influencing skills. Such people are hard to find and even harder to hold on to, since they are practically irreplaceable and are rarely allowed to grow into new roles. Demand modeling allows you to find planners more easily and provide them with a career path just like any other employee.

Beginning with the next part, I will start explaining the concepts and the technical underpinnings of demand modeling.